By Anna Nadibaidze and Robin Vanderborght

The public dispute that erupted recently between the US Department of War (formerly Department of Defense) and the technology company Anthropic has captured the attention of global media and experts. Anthropic has developed the popular large language model (LLM) Claude, which is used not only by private consumers worldwide, but also by US government agencies and the US military.

In a closed-door meeting last week, Secretary of War Pete Hegseth reportedly issued an ultimatum for Anthropic to abandon its so-called ‘red lines’ regarding the employment of its AI models in warfare. These red lines prohibit the use of its products such as Claude for 1) domestic mass surveillance and 2) fully autonomous weapon systems, which select and engage targets without any human oversight. All other use cases, CEO Dario Amodei confirmed in a CBS interview, would be allowed.

Anthropic refused to abandon its red lines and concede to the Pentagon’s ultimatum. In response, President Trump ordered federal agencies to “immediately” stop using products provided by Anthropic. Simultaneously, Pete Hegseth posted on X, the social media platform owned by Elon Musk, that he had designated the firm a supply-chain risk to US national security.

The designation also means that companies working with the US military are banned from working with Anthropic. Given that Claude is deeply embedded in the US government apparatus, a six-month phase-out period for federal agencies and the US military is now in force. Anthropic has said that it will sue the US government.

The US military’s ultimatum for a private company to abandon its restrictions on the employment of its AI models in the use of force is symptomatic of several trends in the development and governance of military applications of AI. In this blog post, we highlight three takeaways from this clash.

1. The military use of AI is shaped by dynamics between governments and the private sector

A substantial part of the international community is grappling with how military applications of AI can be designed and employed in a responsible manner, for example during high-level conferences like the Responsible AI in the Military Domain (REAIM) summits. But while governance remains fragmented, and while operationalised norms on ‘Responsible AI’ are missing, the use of military applications of AI is determined by government officials and company executives.

We have shown elsewhere that technology companies are prominent players shaping perceptions of, and possibilities for, military uses of AI. They do so by spreading particular narratives and hype surrounding AI technologies, by developing and designing products in specific and permissive ways, and by claiming epistemic authority over the future, and specifically over the future of warfare.

Anthropic, for instance, has now denied the Pentagon access to Claude for mass surveillance of US citizens and for fully autonomous weapon systems, but it had earlier given broad permissions with regard to all other use cases, for example the employment of Claude in AI-based decision-support systems (AI DSS) as part of the use of force. It also cooperates with other technology companies, such as Palantir and Amazon, to provide its LLMs to the US military.

Handing over decisions about war and violence to a handful of government officials (without Congressional oversight, in this case) and technology entrepreneurs, which is effectively what is happening here, is not only problematic for democratic states. This trend also raises questions about the increased power of the commercial technology sector and the venture capitalists that back these companies.

2. The US has its own interpretation of ‘responsible’ integration and use of AI in warfare

Secretary Hegseth’s insistence on using Anthropic’s models without any restrictions is a clear indication of how the current Trump administration is reinterpreting what it means to act ‘responsibly’ when it comes to military AI. The US is turning away from the international ‘Responsible AI’ agenda and the principles typically associated with it, such as ethics, safety, and accountability.

The Biden administration emphasized the necessity of setting norms and limitations on specific military uses of AI, even if these were not legally binding. It promoted, for example, the US-led 2023 Political Declaration on Responsible Military Use of Artificial Intelligence and Autonomy. The current administration has shifted towards adopting AI as extensively as possible or, in Secretary Hegseth’s own words, to “accelerate like hell”. Hegseth’s approach to becoming an “AI-first-department”, which he says is about using AI “without ideological constraints that limit lawful military applications”, is essentially about normalizing unlimited integration and use of AI in the military, and characterizing this process as “lawful”.

The US now understands ‘using AI everywhere in defence’ as the responsible thing to do. This is also visible in international contexts, as the US is distancing itself from ‘Responsible AI’ global governance debates. For example, the US did not endorse the declaration after the 2026 REAIM summit, where its delegation played a minor role.

As argued previously on the AutoNorms blog, the inherent vagueness of the term ‘Responsible AI’ allows actors to reinterpret its meaning in a variety of ways, including in the sense that integrating AI everywhere is the responsible thing to do. And it is precisely this ambiguity that contributes to the further fragmentation of approaches to global governance in this field.

3. There is (still) too much focus on fully autonomous weapons while AI is already part of the kill chain

At first glance, the ‘red lines’ issued by Anthropic (and those of other companies such as OpenAI) appear to be sensible as they match two important AI ethics principles: data privacy and human oversight over critical decisions such as the use of force.

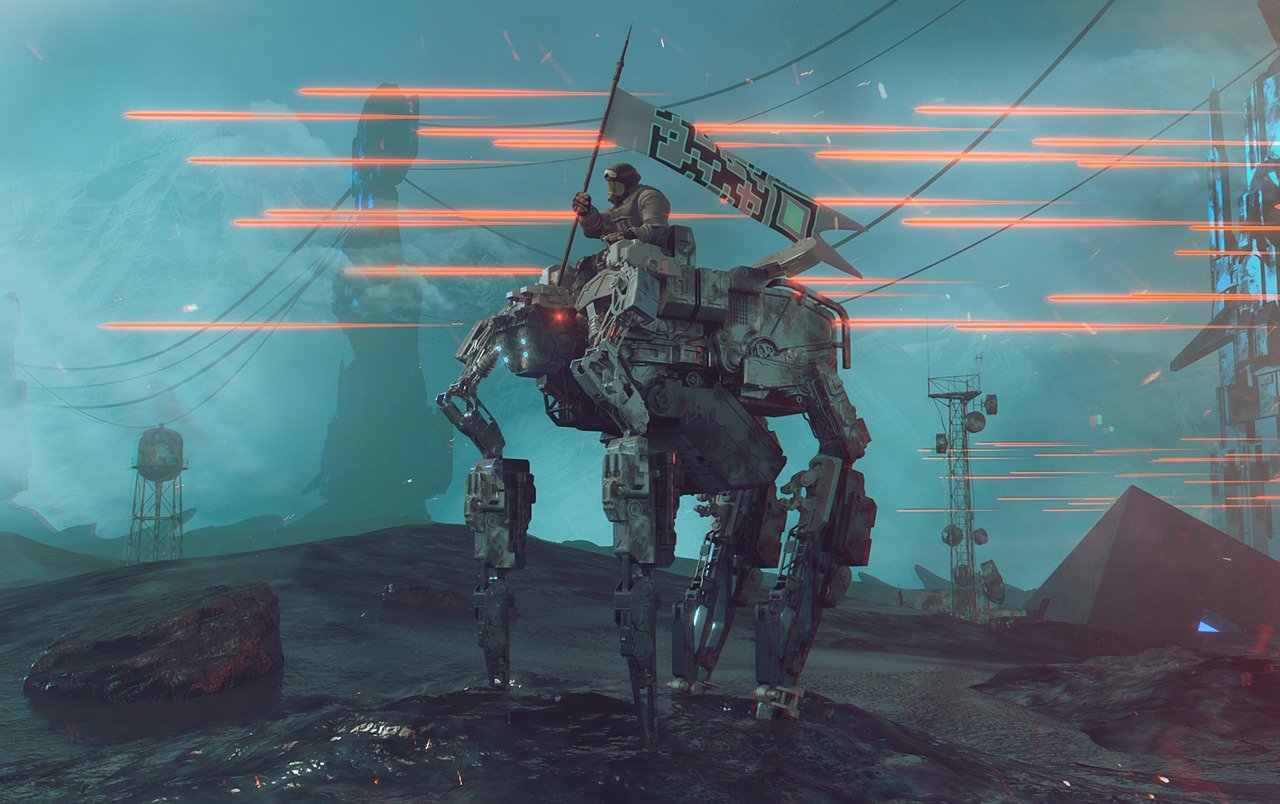

But the singular focus on fully autonomous weapon systems does not capture the many other concerns surrounding AI in the military domain. The central issue today is not about AI systems completely replacing humans in military operations. Warning of an army of autonomous robots roaming the battlefield, selecting and engaging targets as it pleases, distracts from the very real effects of AI adoption that we see today. Serious concerns relate to how humans exercise their agency while interacting with AI-enabled systems and engaging in various tasks that may directly or indirectly be part of the use of force, for example when employing LLMs like Claude in AI DSS.

Recent reporting has uncovered that Anthropic’s Claude was used as part of the US military operation in Venezuela in January 2026. It is also reportedly being used in the ongoing US and Israeli war on Iran. While limited information is available regarding the precise uses of Claude in these operations, it is fair to suppose that the LLM was employed for tasks that support military personnel in planning and targeting decisions, such as simulations, intelligence analysis, or target identification.

This is facilitated through Anthropic’s partnership with Palantir, which enables Claude’s integration in the Maven Smart System, the command-and-control platform used by the US military and NATO. According to reports, the US military employed Maven together with Claude to plan operations in Iran, “suggest hundreds of targets” and “prioritize these targets according to importance”.

We should not assume that these systems can (or will) replace humans altogether. As with all AI DSS, there is human oversight, at least in theory. The question then becomes whether and how the use of LLMs such as Claude will affect humans’ ability to exercise the level of judgement needed for strategic, legal, and ethical decision-making.

For instance, we may ask whether humans are given the conditions needed to cross-check the outputs of LLMs, which would be necessary considering the rate at which LLMs provide factually wrong outputs. These questions need to be foregrounded in the debates on AI in warfare, rather than the dystopian imaginary of fully autonomous drones roaming the battlefield looking for an easy (and unauthorized) kill. Thus, while Dario Amodei argues that AI models are not (yet) reliable enough to be used in fully autonomous weapons, the US military is already employing these tools as part of targeting, just not in so-called ‘killer robots’.

In conclusion, the feud between Anthropic and the Pentagon exposes important issues: What role should technology companies have in warfare? How can AI technologies be employed in military operations in a responsible manner, if such a thing is possible in the first place? How do major military powers imagine the role of AI technologies in warfare? These questions have been central to the ongoing discussions on military uses of AI, and the events of the past week demonstrate once again the urgency of providing convincing answers.

About the authors

Anna Nadibaidze is a postdoctoral researcher at the Center for War Studies, University of Southern Denmark.

Robin Vanderborght is a postdoctoral researcher in International Relations at the University of Antwerp. He is currently working on a project that examines the impact of European technology startups on the norms and conduct of (algorithmic) warfare.